Introduction

Even though humans share the same basic characteristics (two arms, two legs, etc.), certain traits make each of us as unique as the stars in the sky. Each of us has our own genes, which are expressed to create the wonderful individuals we are. When unwrapping a gift, what we look forward to is the contents of the box, not the wrapping paper. The same applies to humans. True beauty comes from within, but, as with presents, nice wrapping really sets the mood.

Make me Stunning

Throwback

The beauty industry has become a multibillion-dollar market, with new products from major brands and celebrity lineups emerging every day. Cosmetics, however, are nothing new: they have been around since ancient times. Excavations have shown that ancient Egyptians ground minerals such as green malachite or black galena to create makeup and used red ochre to blush their cheeks. The Iliad and the Odyssey were the first sources that showed that the Greeks infused oil with plants, flowers, spices, and fragrant ingredients such as myrrh and oregano to create fragrances (Cosmetics in the Ancient World - World History Encyclopedia). Of course, some of these preparations proved to be poisonous or just gross, so we have stopped using them, but the hunt for external beauty still goes on.

Wanna Go Shopping?

So, you decided to walk into the mall. There it is, shiny, magnificent! The cosmetics store! You hear its call, and you just need to get inside. You see row after row of primers, foundations, contours, blushes, lipsticks, moisturisers, shades, concealers, and it goes on and on! We know that deep down, you want to try them all, but we also know how busy you are. Here is where it gets really interesting: AI comes to your aid!

If you read our article on cosmetology, you have seen how smart mirrors can analyse your characteristics to find the perfect hairstyle for you. Who said that the same can’t be done when choosing cosmetics? Companies are developing smart mirrors specifically for this purpose. These powerful tools can be the best ally for both pros who want something extra and beginners who just don’t know where to start. The device uses GPU-accelerated Computer Vision (CV) to scan your face in real time. By capturing several different areas of your face, the mirror can identify your skin tone, eye colour and shape, hairstyle, facial characteristics and symmetry, and even skin condition, and then suggest product combinations that work well together or suitable skincare products. Of course, you won’t be convinced just because a machine says so. What if the machine could show you the result? Yes, it’s possible with an Augmented Reality (AR) try-on. This branch of Extended Reality (XR) lets you see yourself wearing makeup without even putting it on!
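To make the skin-tone step a little more concrete, here is a minimal sketch of how a measured tone could be matched to a product shade. It is not how any particular smart mirror works: the shade names and RGB values in `SHADES` are invented for illustration, the pixel sample is assumed to come from a face region that a CV model has already located, and plain Euclidean distance in RGB stands in for the lighting correction and perceptual colour spaces a real product would likely need.

```python
from math import sqrt

# Hypothetical shade catalogue: names and RGB values are made up for this sketch.
SHADES = {
    "porcelain": (245, 222, 205),
    "beige": (224, 187, 158),
    "honey": (198, 149, 110),
    "chestnut": (141, 92, 62),
    "espresso": (87, 55, 38),
}


def mean_colour(pixels):
    """Average a list of (R, G, B) pixels sampled from a face region."""
    if not pixels:
        raise ValueError("at least one pixel is required")
    n = len(pixels)
    return tuple(sum(p[c] for p in pixels) / n for c in range(3))


def colour_distance(a, b):
    """Euclidean distance between two RGB colours (a simplifying assumption)."""
    return sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def suggest_shade(pixels, shades=SHADES):
    """Return the name of the catalogue shade closest to the sampled skin tone."""
    tone = mean_colour(pixels)
    return min(shades, key=lambda name: colour_distance(tone, shades[name]))


# Example: three pixels assumed to be sampled from the cheek area.
cheek_sample = [(200, 150, 112), (196, 147, 108), (199, 151, 111)]
print(suggest_shade(cheek_sample))  # prints "honey"
```

The same nearest-match idea could be extended to lipstick or blush by swapping in a different catalogue.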

Unfortunately, so far, there has been no collaboration between these devices, which are still under development, and cosmetics brands, but if such a thing happens, things will get even better. With a connection to a company’s cloud server, the device could not only suggest the colours and hues that will make you irresistible but also tell you the exact brand and colour code of the product. And just when you think things can’t get better, why don’t we introduce the Internet of Things (IoT) to this? You have found the product instantaneously, yet you need to use your phone or laptop to order it? Nope! Just press a button on your smart mirror, and the product will be delivered to you. Now, maybe you don’t want to spend the extra money on a niche device. Understandable. The same idea can be implemented using the powerful camera your smartphone has!

Now that we have mentioned phones, with all these AI tools that are constantly being rolled out, why not make the ‘best’ even better? Natural Language Processing (NLP) models have come a long way. You have seen them in the different GPT assistants that you use to do your homework or write an email for you. This, however, is not the first time you have encountered them. Digital assistants have used NLP for a while to extract information and form answers (you may want to recall a certain fruit phone at this point). Now, combine this with speech-to-text and text-to-speech, and there you have it. Your smart mirror will finally be able to answer when you ask, ‘Mirror mirror on the wall, who is the fairest of them all?’ and even give you feedback on the selection of products that will make you even more dazzling!

Read more: AI Revolutionising Fashion & Beauty

Why me?

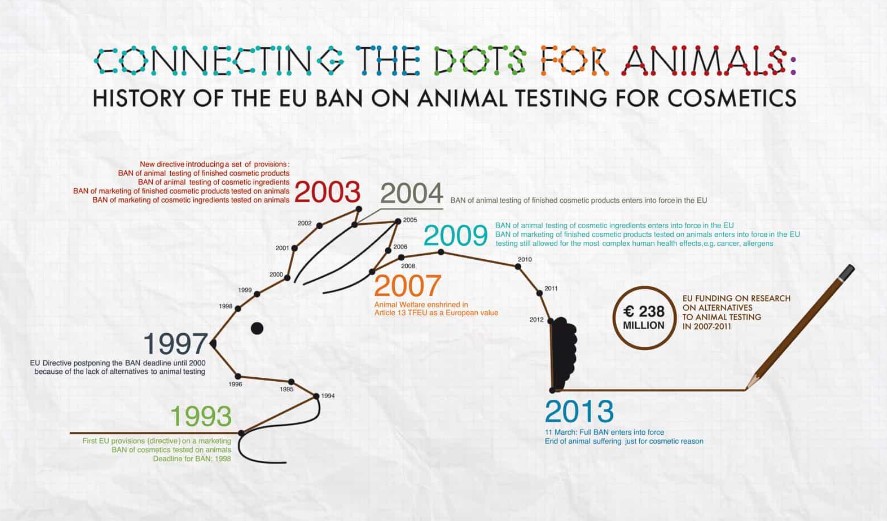

There is a dark side to the cosmetics industry that is worth discussing, and this is none other than animal cruelty. Sadly enough, major cosmetics companies continue to test their products on animals or, to comply with local regulations, perform these tests in other countries where this practice is allowed (These Beauty Brands Are Still Tested on Animals, 2015). The reason behind this is consumer safety, as no company wants to get involved in litigation; nevertheless, it is not fair to perform such tests on beings that cannot express themselves. People for the Ethical Treatment of Animals (PETA) is the largest animal rights organisation in the world, with more than 9 million members and supporters worldwide.

If you are wondering whether there are ways to avoid such practices for the well-being of our four-legged friends, there are, and technology is on our side! As you know, our skin has specific characteristics, some of which are common to all of us, while others are individual peculiarities. For example, most people have what we call a ‘normal’ skin type, but others have ‘oily’ or ‘dry’ skin, or even a combination of these. We already mentioned how a well-trained CV algorithm can be used to examine your skin and extract useful information about it. However, this is applicable not only to humans. The same algorithm (with some tweaks here and there) can be applied to the analysis of animal skin. In this way, we could predict the skin reaction before it even occurs, completely excluding the animals from the process.

But wait, if you can analyse skin to avoid the involvement of animals, why not analyse human skin in the first place? Well, you are absolutely right! Remember when we said earlier that each one of us is unique? Through careful planning, an enormous database representing each skin type could be created and constantly enriched with fresh data. Then, millions of virtual tests could be run on local infrastructure using Edge Computing to handle the huge volume of information, reaching conclusions with a precision that could match that of testing on animals. This does not necessarily mean that animals are out of the equation, but this time they stay in it for a good purpose. Just as humans have peculiar skin, so do animals. A similar algorithm and similar tests could be run to develop cosmetics and skin products for our little friends.

NLP could also be involved in such processes. You have your skin analysis, and you are using your smartphone or smart mirror to choose the perfect cosmetics, yet there are so many options that you need to apply some filters when searching. This can be done instantly through NLP. Just say which ingredients you absolutely want or don’t want the products to contain, and there you have it! Perfect choices every time!
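The ingredient filter described above can be sketched in a few lines. This is only an illustration under stated assumptions: the request is assumed to have already been converted from speech to text, simple ‘with’/‘without’ keyword rules stand in for a real NLP model, and the `PRODUCTS` catalogue with its product names and ingredients is invented.

```python
import re

# Hypothetical catalogue; product names and ingredient lists are invented.
PRODUCTS = [
    {"name": "Glow Serum", "ingredients": {"water", "niacinamide", "glycerin"}},
    {"name": "Velvet Cream", "ingredients": {"water", "parabens", "shea butter"}},
    {"name": "Pure Balm", "ingredients": {"shea butter", "vitamin e"}},
]

# A clause starts with "with" or "without" and runs until the next such keyword.
CLAUSE = re.compile(r"\b(without|with)\b\s+(.*?)(?=\bwithout\b|\bwith\b|$)")


def parse_request(text):
    """Split a request such as 'with shea butter, without parabens'
    into (wanted, unwanted) ingredient sets using keyword rules."""
    wanted, unwanted = set(), set()
    for keyword, items in CLAUSE.findall(text.lower()):
        # Ingredients inside a clause are separated by commas or "and".
        names = {part.strip(" .!") for part in re.split(r",|\band\b", items)}
        names.discard("")
        (unwanted if keyword == "without" else wanted).update(names)
    return wanted, unwanted


def filter_products(text, products=PRODUCTS):
    """Keep products that contain every wanted and no unwanted ingredient."""
    wanted, unwanted = parse_request(text)
    return [
        p["name"]
        for p in products
        if wanted <= p["ingredients"] and not (unwanted & p["ingredients"])
    ]


print(filter_products("with shea butter, without parabens"))  # prints ['Pure Balm']
```

A production assistant would need to handle synonyms, misspellings, and freer phrasing, which is where a trained language model would replace the keyword rules.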

It’s Just a Scar

Medicine is probably one of the most respected professions out there. There is a specific branch, though, that is dedicated to making us look better, and that is none other than cosmetic plastic surgery. Now, don’t be fooled. Plastic surgery is not just about improving our appearance for cosmetic purposes. There are cases where the surgery has a purely functional purpose. Examples include blepharoplasty, performed when the eyelid interferes with vision; rhinoplasty, where the nose is reshaped not only to match the rest of the face but also to allow proper breathing and oxygenation of the body; and scar revision, where surgeons can minimise the visibility of scars and improve their texture, giving the skin a more natural appearance and potentially even improving mobility and relieving discomfort.

Fashion and current trends certainly have an impact on the decisions we make about all sorts of matters, including plastic surgery. For example, an 86% increase in buttock lifts has been observed in women from 2019 to 2022, according to the American Society of Plastic Surgeons, while breast reduction for aesthetic purposes has seen an increase of 54%, and blepharoplasty is up 13% over the same period (Plastic Surgery Statistics).

Does it Really Matter?

It doesn’t matter at all why someone chooses to have an operation of this type. It’s your body and, therefore, your choice. However, not all surgeries are 100% successful. Sometimes, even routine operations can go south, with severe implications. We need to be sure about our doctor, just as the doctor needs to be sure of what they are doing. Isn’t that, however, a bit unfair for the less experienced? Bringing AI into the process can greatly improve the chances of success.

The usual suspect, CV, can get to work even before the operation starts. With a careful CV-assisted examination of the patient, the doctor can decide more accurately on the optimal method, the exact dosage of the filling material, or the amount of tissue that must be removed, reducing potential errors. Inside the operating theatre, it wouldn’t hurt to have an extra pair of computer eyes watching over the procedure or even indicating to the surgeon exactly where they need to operate through AR or Virtual Reality (VR). Most likely, you are familiar with the concept of robotic surgery, with medical devices such as the world-famous da Vinci appearing on the news every now and then. These devices are mostly designed for larger-scale operations that demand a level of detail and precision beyond human limits. However, why limit things and exclude cosmetic surgery? A medical device that can perform cosmetic surgeries would not only be beneficial but also profitable. If we enrich it with AI technology to such an extent that it can almost perform the surgery on its own, the benefits will be even greater. Maximum precision, minimum scars and rehabilitation time, optimal results, and satisfied patients!

Read more: How Augmented Reality is Transforming Beauty and Cosmetics

Summing Up

The cosmetics industry can surely benefit from AI. Technologies such as Computer Vision and Natural Language Processing can really transform the way we interact with cosmetics, on both a private and a corporate level. Using AI, we can have our own assistant when choosing cosmetics, eliminate animal cruelty, and achieve optimal cosmetic surgery results.

What We Offer

At TechnoLynx, we are all about innovation. We specialise in providing custom tech solutions tailored to your needs. We know the benefits of integrating AI into a wide variety of industries, including cosmetics, while ensuring safety in human-machine interactions, managing and analysing large data sets, and addressing ethical considerations.

Our precise software solutions are designed to empower AI-driven algorithms in different fields and industries. At TechnoLynx, we are driven to adapt to the ever-changing AI landscape. Our solutions are built to increase efficiency, accuracy, and productivity for your business. Don’t hesitate to contact us. We will be more than happy to answer any questions!

List of references

- Admin (2024). ‘The Future of Plastic Surgery with AI and Personalized Procedures’, Small business articles and business insurance information, 11 January. (Accessed: 27 March 2024).
- Cosmetics (no date). Understanding Animal Research.
- Cosmetics in the Ancient World (no date). World History Encyclopedia. (Accessed: 27 March 2024).
- In Love with the Med (2018). ‘Make Up and Beauty in Ancient Greece’, In Love with the Med, 17 September. (Accessed: 27 March 2024).
- Plastic Surgery Statistics (no date). American Society of Plastic Surgeons. (Accessed: 27 March 2024).
- Smart Makeup Mirror: The Complete Guide 2024 (2024). PerfectCorp. (Accessed: 27 March 2024).
- These Beauty Brands Are Still Tested on Animals (2015). PETA. (Accessed: 27 March 2024).