Visual analytic intelligence brings clarity to complex models. Teams use it to see how neural networks make decisions. The goal stays simple. Show what a learning model learns and why it predicts a result.

Neural networks take loose cues from the human brain, yet they rely on maths and code, not biology (LeCun et al., 2015; Goodfellow et al., 2016). Clear graphics and interactive visuals support this work. Experts review visualised data in real time and act with confidence (Olah et al., 2018; Ribeiro et al., 2016).

From raw signals to insight

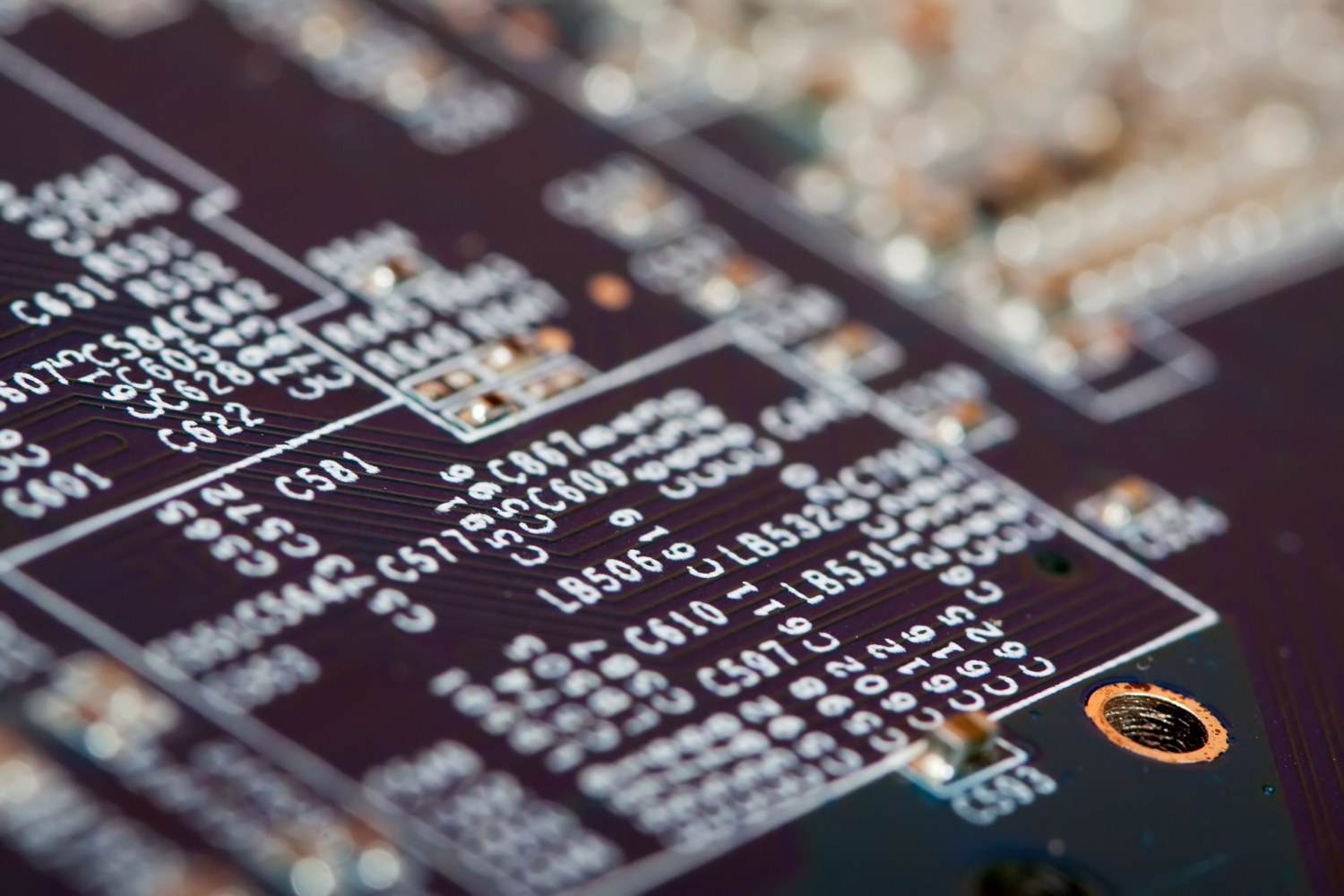

Every project starts with a data set. Teams define a task and collect training data. They clean it. They tag it. They check quality. Strong data improves the learning model and cuts risk later (Sculley et al., 2015; Mitchell, 1997).
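A minimal sketch of the clean-and-check step, assuming records arrive as Python dicts with a `label` field. The field names and toy rows are hypothetical. It counts missing values, exact duplicates, and label balance, which are typical first checks before training.

```python
from collections import Counter


def data_quality_report(rows, label_key="label"):
    """Summarise simple quality issues in a list of dict records."""
    # Count missing (None) values per field.
    missing = Counter(key for row in rows for key, value in row.items() if value is None)
    # Exact duplicate records; sorting items makes key order irrelevant.
    seen = Counter(tuple(sorted(row.items())) for row in rows)
    duplicates = sum(count - 1 for count in seen.values())
    # Label counts reveal under-represented classes and untagged rows (None).
    labels = Counter(row.get(label_key) for row in rows)
    return {
        "rows": len(rows),
        "missing": dict(missing),
        "duplicates": duplicates,
        "labels": dict(labels),
    }


# Hypothetical toy records.
rows = [
    {"id": 1, "x": 0.5, "label": "cat"},
    {"id": 2, "x": None, "label": "dog"},
    {"id": 1, "x": 0.5, "label": "cat"},
    {"id": 3, "x": 0.7, "label": None},
]
report = data_quality_report(rows)
print(report)
```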

Analysts then build a pipeline for data analysis. They track input features and the output layer. They keep runs reproducible. They show each step with visualised data so people can trust the process (Amershi et al., 2019; Krishnan and Wu, 2017).

Visual analytic intelligence links people with models. Screens show real-time curves, histograms, and saliency maps. Plots flag drift or bias before damage spreads. Teams stay data-driven through the full cycle, from lab tests to live systems (Ribeiro et al., 2016; Biewald, 2020).


Neural network architectures in practice

Different goals need different neural network architectures. Convolutional neural networks process images and other spatial data efficiently because they reuse small filters across every position. Recurrent neural networks track order and time. A multilayer perceptron handles tabular features and compact signals (LeCun et al., 2015; Hochreiter and Schmidhuber, 1997).

An artificial neural network stacks layers, each with activation functions that shape signals. The output layer encodes a class, a score, or a numeric value (Goodfellow et al., 2016; Glorot et al., 2011).
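To make the layer stack concrete, here is a small forward pass in plain Python. The weights are hand-picked and untrained (a hypothetical toy), ReLU is assumed for the hidden layer, and softmax turns the output layer's scores into class probabilities.

```python
import math


def dense(v, weights, bias):
    # weights[j] holds the incoming weights of output unit j.
    return [sum(w * x for w, x in zip(row, v)) + b for row, b in zip(weights, bias)]


def relu(v):
    return [max(0.0, x) for x in v]


def softmax(z):
    m = max(z)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in z]
    total = sum(exps)
    return [e / total for e in exps]


def forward(x, layers):
    """Apply (weights, bias) layers: ReLU on hidden layers, softmax at the output layer."""
    h = x
    for weights, bias in layers[:-1]:
        h = relu(dense(h, weights, bias))
    weights, bias = layers[-1]
    return softmax(dense(h, weights, bias))


# Hypothetical, untrained weights: 2 inputs -> 2 hidden units -> 3 classes.
layers = [
    ([[1.0, 0.0], [0.0, 1.0]], [0.0, 0.0]),
    ([[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]], [0.0, 0.0, 0.0]),
]
probs = forward([1.0, -2.0], layers)
print([round(p, 3) for p in probs])
```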

Visual analytic intelligence helps teams compare designs. People view filters, gates, and attention maps. They judge what each block adds. They drop parts that add little value. They keep parts that lift accuracy or stability. Clear views reduce guesswork and speed iteration (Olah et al., 2018; Zeiler and Fergus, 2014).

Seeing how convolution works

Convolutional neural networks use filters that slide across pixels. Early layers learn edges. Later layers learn shapes and textures. Final layers learn task-specific cues.
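The sliding-filter idea can be sketched in a few lines. This assumes a single-channel image, no padding, and stride 1. Like most deep learning libraries, it computes cross-correlation, which is what CNN layers call convolution. The Sobel-style kernel is hand-set for illustration, whereas a trained network learns its own filters.

```python
def conv2d_valid(image, kernel):
    """Slide `kernel` over `image` (no padding, stride 1) and sum elementwise products."""
    kh, kw = len(kernel), len(kernel[0])
    out_h, out_w = len(image) - kh + 1, len(image[0]) - kw + 1
    out = []
    for i in range(out_h):
        row = []
        for j in range(out_w):
            acc = 0.0
            for di in range(kh):
                for dj in range(kw):
                    acc += image[i + di][j + dj] * kernel[di][dj]
            row.append(acc)
        out.append(row)
    return out


# Hand-set vertical-edge filter (Sobel-style), for illustration only.
SOBEL_X = [[-1, 0, 1], [-2, 0, 2], [-1, 0, 1]]

# Hypothetical 5x5 image: dark columns on the left, bright on the right.
image = [[0, 0, 1, 1, 1] for _ in range(5)]
edge_map = conv2d_valid(image, SOBEL_X)
for row in edge_map:
    print(row)
```

The response is strongest where the window straddles the dark-to-bright boundary and zero over the flat bright region. A heatmap of an early-layer filter makes this kind of pattern visible.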

Visual tools can show what each filter responds to. Teams view heatmaps on the original image. The maps guide fixes to data and labels (Zeiler and Fergus, 2014; Simonyan et al., 2014).

Grad-CAM and related methods highlight regions that drive a score. Engineers check if the network looks at the right spot. If a model looks at a watermark, not the object, they spot it fast. They then fix the data set or adjust the loss (Selvaraju et al., 2017; Adebayo et al., 2018).

Understanding sequence and memory

Recurrent neural networks model signals that change with time. They handle speech, sensor readings, and text. LSTM and GRU variants use gates to capture long-range dependencies. Visual analytic intelligence shows gate values, memory cells, and attention over time steps.
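To give a feel for what gate plots display, the sketch below runs one scalar LSTM cell (hidden size 1) over a short sequence and records its gate values at each step. The parameter values are hypothetical. A large forget-gate bias is chosen so the cell keeps most of its memory from step to step.

```python
import math


def sigmoid(x):
    # Numerically stable logistic function.
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    e = math.exp(x)
    return e / (1.0 + e)


def lstm_step(x, h_prev, c_prev, params):
    """One step of a scalar LSTM; params maps gate name -> (w_x, w_h, bias)."""
    def pre(gate):
        w_x, w_h, b = params[gate]
        return w_x * x + w_h * h_prev + b

    i = sigmoid(pre("input"))    # how much new content to write
    f = sigmoid(pre("forget"))   # how much old memory to keep
    o = sigmoid(pre("output"))   # how much memory to expose
    g = math.tanh(pre("cell"))   # candidate content
    c = f * c_prev + i * g
    h = o * math.tanh(c)
    return h, c, {"input": i, "forget": f, "output": o}


# Hypothetical parameters, not learned from data.
params = {
    "input": (1.0, 0.5, 0.0),
    "forget": (0.0, 0.0, 3.0),
    "output": (0.5, 0.5, 0.0),
    "cell": (1.0, 0.5, 0.0),
}
h, c, trace = 0.0, 0.0, []
for x in [1.0, 0.0, 0.0, -1.0]:
    h, c, gates = lstm_step(x, h, c, params)
    trace.append(gates)  # one row per time step, ready to plot
for step, gates in enumerate(trace):
    print(step, {k: round(v, 3) for k, v in gates.items()})
```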

Plots make failure modes obvious. Teams can see vanishing gradients or stuck states and tune the learning rate or depth (Hochreiter and Schmidhuber, 1997; Cho et al., 2014).

Classic blocks still matter

A multilayer perceptron remains useful. Many systems still rely on dense layers with simple activation functions. Engineers test ReLU, GELU, or tanh and watch calibration over epochs.

Small changes can lift stability in noisy data (Glorot et al., 2011; Hendrycks and Gimpel, 2016). Visual dashboards show loss, accuracy, and calibration curves. Stakeholders grasp progress at a glance.


From theory to working systems

Visual analytic intelligence supports end‑to‑end data analysis. Teams wire input features to the model and watch outputs change. They test stress cases. They add noise or occlude parts of an image. They track results with interactive visuals. The work stays data-driven rather than resting on gut feel (Ribeiro et al., 2016; Doshi-Velez and Kim, 2017).
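One of the stress tests mentioned here, occlusion, can be sketched as follows: blank out one patch at a time and record how much the score drops. The `toy_score` function is a hypothetical stand-in that only looks at the top-left corner. In practice it would be the model's score for the class of interest.

```python
def occlusion_map(image, score_fn, patch=2, fill=0.0):
    """Heatmap of score drops when each patch x patch window is set to `fill`."""
    base = score_fn(image)
    h, w = len(image), len(image[0])
    heat = [[0.0] * w for _ in range(h)]
    for i in range(0, h, patch):
        for j in range(0, w, patch):
            rows = range(i, min(i + patch, h))
            cols = range(j, min(j + patch, w))
            occluded = [row[:] for row in image]  # copy so the input is untouched
            for r in rows:
                for c in cols:
                    occluded[r][c] = fill
            drop = base - score_fn(occluded)
            for r in rows:
                for c in cols:
                    heat[r][c] = drop
    return heat


def toy_score(img):
    # Hypothetical "model": responds only to the top-left 2x2 region.
    return sum(img[r][c] for r in range(2) for c in range(2))


image = [[1.0] * 4 for _ in range(4)]
heat = occlusion_map(image, toy_score)
for row in heat:
    print(row)
```

A real model that focused on a watermark instead of the object would show its largest drops over the watermark patch.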

Clear views help with audits. People can show how the model treats each group in the data set. They can also show steps that reduced bias. Auditors see the links from inputs to decisions. Trust grows when reviewers see transparent evidence (Mitchell et al., 2019; Selbst and Barocas, 2018).

Input, output, and useful signals

Neural networks map inputs to outputs through layers. The output layer turns internal states into classes or scores. Plots of logits, margins, and probabilities reveal overconfidence. Teams adjust loss functions and temperature scaling to improve calibration (Guo et al., 2017; Niculescu-Mizil and Caruana, 2005).
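The following is a minimal sketch of temperature scaling with an expected calibration error (ECE) check, in plain Python. It assumes validation logits and labels are already available. The four toy rows are hypothetical and deliberately overconfident. The sketch fits the single temperature by grid search on negative log-likelihood. Guo et al. (2017) optimise the same objective, though not necessarily with a grid.

```python
import math


def softmax(logits, temperature=1.0):
    scaled = [z / temperature for z in logits]
    m = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(z - m) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def nll(logit_rows, labels, temperature):
    # Mean negative log-likelihood of the true labels.
    return -sum(math.log(softmax(z, temperature)[y])
                for z, y in zip(logit_rows, labels)) / len(labels)


def fit_temperature(logit_rows, labels):
    grid = [0.5 + 0.1 * k for k in range(46)]  # candidate temperatures 0.5 .. 5.0
    return min(grid, key=lambda t: nll(logit_rows, labels, t))


def confidences_and_hits(logit_rows, labels, temperature):
    probs = [softmax(z, temperature) for z in logit_rows]
    conf = [max(p) for p in probs]
    hits = [int(p.index(max(p)) == y) for p, y in zip(probs, labels)]
    return conf, hits


def expected_calibration_error(conf, hits, n_bins=10):
    """Weighted mean |accuracy - confidence| over equal-width confidence bins."""
    ece = 0.0
    for b in range(n_bins):
        lo, hi = b / n_bins, (b + 1) / n_bins
        idx = [k for k, c in enumerate(conf) if lo < c <= hi]
        if idx:
            acc = sum(hits[k] for k in idx) / len(idx)
            avg_conf = sum(conf[k] for k in idx) / len(idx)
            ece += len(idx) / len(conf) * abs(acc - avg_conf)
    return ece


# Hypothetical validation set: confident logits, but only 3 of 4 are correct.
val_logits = [[4.0, 0.0], [4.0, 0.0], [0.0, 4.0], [0.0, 4.0]]
val_labels = [0, 0, 1, 0]

t = fit_temperature(val_logits, val_labels)
ece_before = expected_calibration_error(*confidences_and_hits(val_logits, val_labels, 1.0))
ece_after = expected_calibration_error(*confidences_and_hits(val_logits, val_labels, t))
print(f"T={t:.1f}  ECE before={ece_before:.3f}  after={ece_after:.3f}")
```

Dividing logits by one positive temperature never changes the predicted class, so accuracy is untouched while confidence moves closer to the observed hit rate.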

People also inspect intermediate layers. They project embeddings with t‑SNE or UMAP. They check if classes cluster well. If clusters overlap, they collect more training data or rethink features (Van der Maaten and Hinton, 2008; McInnes et al., 2018).

Real-time systems and computational power

Real-time services need speed and reliability. Teams size computational power for peak loads. They profile inference, trim layers, and quantise weights.

They cache parts of the graph. They prune channels that add little value (Han et al., 2016; Jacob et al., 2018). Visual dashboards show latency and throughput per model version. Engineers react before users feel lag.
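Quantisation can be sketched as mapping float weights to 8-bit integers plus one scale factor. This is a simple symmetric, per-tensor variant chosen for brevity, which is an assumption. Jacob et al. (2018) describe an affine scheme with a zero point. The weight values are hypothetical.

```python
def quantize_int8(weights):
    """Symmetric per-tensor quantisation: w is approximated by scale * q, q in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q, scale):
    return [scale * v for v in q]


weights = [0.5, -1.27, 0.0, 0.333]  # hypothetical layer weights
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))
print(q, scale, max_error)  # rounding error stays within about scale / 2
```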

Edge devices need compact models. People deploy distilled students of larger teachers. They keep accuracy steady while cutting memory and energy. Visual tools show the trade‑offs so leaders can choose the right balance for cost and quality (Hinton et al., 2015; Howard et al., 2017).
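Distillation trains the student on the teacher's softened outputs as well as on the true labels. The sketch below computes the loss for one example, following the soft-target idea and the t-squared scaling of Hinton et al. (2015). The temperature, the blend weight `alpha`, and the logits are hypothetical choices. A real setup would average over batches and backpropagate through a framework.

```python
import math


def softmax(z, t=1.0):
    scaled = [v / t for v in z]
    m = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(v - m) for v in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(student_logits, teacher_logits, label, t=4.0, alpha=0.5):
    """Blend of soft-target KL (at temperature t) and hard-label cross-entropy."""
    p_teacher = softmax(teacher_logits, t)
    p_student = softmax(student_logits, t)
    # KL(teacher || student) on softened outputs; the t**2 factor keeps gradient
    # magnitudes comparable across temperatures.
    soft = t * t * sum(pt * math.log(pt / ps) for pt, ps in zip(p_teacher, p_student))
    # Ordinary cross-entropy against the true label at temperature 1.
    hard = -math.log(softmax(student_logits)[label])
    return alpha * soft + (1.0 - alpha) * hard


# Hypothetical logits for a 3-class task.
teacher = [6.0, 2.0, -1.0]
close_student = [5.5, 2.2, -0.8]
far_student = [0.0, 3.0, 1.0]
print(distillation_loss(close_student, teacher, label=0))
print(distillation_loss(far_student, teacher, label=0))
```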


Designing a learning model that people can trust

Strong models come from strong process. Teams write clear goals and collect a representative data set. They log data issues and label drift. They track lineage from raw files to features. Visual analytic intelligence turns this work into shared views. Everyone sees the same facts (Amershi et al., 2019; Sculley et al., 2015).

People test variants of neural network architectures under the same conditions. They fix seeds. They control data splits. They compare metrics with confidence bounds.

Plots show when a small gain is noise. This discipline saves time and money (Dodge et al., 2019; Lipton and Steinhardt, 2019).
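One common way to check whether a small gain is noise is a paired bootstrap over the shared test items, with a fixed seed so the interval is reproducible. This sketch assumes that per-item correctness is already recorded as 0/1 for both models. The toy results are hypothetical: B beats A by two points.

```python
import random


def paired_bootstrap_ci(correct_a, correct_b, n_boot=2000, alpha=0.05, seed=0):
    """Percentile interval for accuracy(B) - accuracy(A) on the same test items."""
    rng = random.Random(seed)  # fixed seed makes the interval reproducible
    n = len(correct_a)
    diffs = []
    for _ in range(n_boot):
        idx = [rng.randrange(n) for _ in range(n)]  # resample items with replacement
        diffs.append(sum(correct_b[i] - correct_a[i] for i in idx) / n)
    diffs.sort()
    lo = diffs[int(alpha / 2 * n_boot)]
    hi = diffs[int((1 - alpha / 2) * n_boot) - 1]
    return lo, hi


# Hypothetical per-item results on 100 shared test cases: A gets 80, B gets 82.
correct_a = [1] * 80 + [0] * 20
correct_b = [1] * 78 + [0] * 2 + [1] * 4 + [0] * 16
lo, hi = paired_bootstrap_ci(correct_a, correct_b)
print(f"95% interval for the gain: [{lo:+.3f}, {hi:+.3f}]")
if lo <= 0 <= hi:
    print("The interval includes zero, so the gain may be noise.")
```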

Activation functions and learning stability

Activation functions shape how signals move. ReLU gives sparse activations and simple gradients. GELU replaces ReLU's hard corner at zero with a smooth curve and often lifts accuracy. Swish gives similar gains in some tasks. Visual tools show gradient norms and activation saturation, so engineers can catch dead units and exploding values early (Glorot et al., 2011; Hendrycks and Gimpel, 2016).
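The activations named here, plus two simple health checks, can be sketched in plain Python. The exact GELU uses the standard normal CDF via `math.erf`. The pre-activation values and the 0.99 saturation threshold are hypothetical. A real dashboard would compute these checks per layer over many batches.

```python
import math


def relu(x):
    return max(0.0, x)


def gelu(x):
    # Exact GELU: x * Phi(x), where Phi is the standard normal CDF.
    return 0.5 * x * (1.0 + math.erf(x / math.sqrt(2.0)))


def sigmoid(x):
    # Numerically stable logistic function.
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    e = math.exp(x)
    return e / (1.0 + e)


def swish(x, beta=1.0):
    return x * sigmoid(beta * x)


def dead_relu_fraction(preacts):
    """preacts[u] holds unit u's pre-activations over a batch; 'dead' units never fire."""
    dead = sum(1 for unit in preacts if all(z <= 0 for z in unit))
    return dead / len(preacts)


def tanh_saturation_fraction(preacts, threshold=0.99):
    """Share of tanh outputs with |tanh(z)| > threshold, where gradients are near zero."""
    values = [abs(math.tanh(z)) for unit in preacts for z in unit]
    return sum(v > threshold for v in values) / len(values)


# Hypothetical pre-activations: 3 units observed over a batch of 3 inputs.
preacts = [[-1.0, -0.5, -2.0], [0.3, -0.1, 1.2], [3.5, -4.0, 0.2]]
print([round(f(1.0), 4) for f in (relu, gelu, swish)])
print(dead_relu_fraction(preacts), tanh_saturation_fraction(preacts))
```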

Batch norm and layer norm also help. Plots show their effect on convergence. If curves wobble, teams tune momentum or epsilon. Small steps here can deliver steady training (Ioffe and Szegedy, 2015; Ba et al., 2016).
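The sketch below shows layer normalisation and a batch-norm running-mean update, making clear where epsilon and momentum enter. It assumes one feature vector at a time. Libraries define momentum differently: PyTorch's default of 0.1 weights the new batch statistic, while Keras's 0.99 weights the running value. The convention used here is therefore an assumption.

```python
import math


def layer_norm(x, gamma, beta, eps=1e-5):
    """Normalise one feature vector to zero mean and unit variance, then scale and shift."""
    n = len(x)
    mean = sum(x) / n
    var = sum((v - mean) ** 2 for v in x) / n  # biased (population) variance
    inv_std = 1.0 / math.sqrt(var + eps)      # eps avoids division by zero
    return [g * (v - mean) * inv_std + b for v, g, b in zip(x, gamma, beta)]


def update_running_mean(running, batch_mean, momentum=0.1):
    # PyTorch-style convention: momentum weights the newest batch statistic.
    return (1.0 - momentum) * running + momentum * batch_mean


y = layer_norm([1.0, 2.0, 3.0, 4.0], gamma=[1.0] * 4, beta=[0.0] * 4)
print([round(v, 3) for v in y])
print(layer_norm([2.0, 2.0], [1.0, 1.0], [0.0, 0.0]))  # constant input stays finite

running = 0.0
for batch_mean in [1.0, 1.0, 1.0]:  # hypothetical batch means
    running = update_running_mean(running, batch_mean)
print(round(running, 3))
```

A small momentum makes the running statistics smooth but slow to adapt, which is one reason wobbly curves can prompt a momentum change.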

Making sense of predictions

Visual analytic intelligence does not stop at accuracy. People need reasons. Saliency, integrated gradients, and occlusion tests give hints. LIME and SHAP provide local views for single cases. Combined views often give the clearest story. One view rarely covers all cases (Simonyan et al., 2014; Lundberg and Lee, 2017).
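SHAP builds on Shapley values. For a handful of features, these values can be computed exactly by enumerating feature subsets, as in the sketch below. It assumes absent features are replaced by a single baseline value, which is one of several conventions. The toy model with an interaction term is hypothetical. The `shap` library offers faster estimators, such as KernelSHAP, rather than this brute-force loop, which grows exponentially with the number of features.

```python
from itertools import combinations
from math import factorial


def shapley_values(predict, x, baseline):
    """Exact Shapley values for one input; absent features take their baseline value."""
    n = len(x)

    def value(subset):
        z = [x[i] if i in subset else baseline[i] for i in range(n)]
        return predict(z)

    phi = [0.0] * n
    for i in range(n):
        others = [j for j in range(n) if j != i]
        for size in range(n):  # subsets of the other n - 1 features
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for combo in combinations(others, size):
                subset = set(combo)
                phi[i] += weight * (value(subset | {i}) - value(subset))
    return phi


def toy_model(z):
    # Hypothetical model with an interaction between features 0 and 2.
    return 2 * z[0] + z[1] + z[0] * z[2]


x, baseline = [1.0, 2.0, 3.0], [0.0, 0.0, 0.0]
phi = shapley_values(toy_model, x, baseline)
print(phi, sum(phi), toy_model(x) - toy_model(baseline))
```

The attributions sum to the gap between the prediction and the baseline prediction. The interaction's contribution is split evenly between features 0 and 2.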

Engineers present interactive visuals to product teams and domain experts. Doctors, analysts, or operators ask questions and get live answers. Sessions surface edge cases that static reports miss. Teams then refine labels, fix bugs, or add features (Ribeiro et al., 2016; Wexler et al., 2019).


Data-driven evaluation beyond a single score

Top‑1 accuracy can hide issues. Teams track precision, recall, AUC, and calibration. They also slice by cohort to catch hidden gaps. Plots make gaps visible and hard to ignore. Leaders can then align targets with real‑world risk (Saito and Rehmsmeier, 2015; Guo et al., 2017).
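Slicing by cohort can be sketched as computing precision, recall, and ROC AUC per group. The cohorts, labels, scores, and 0.5 threshold are hypothetical. The example is built so that one cohort looks perfect while the other sits at chance level, a gap that a pooled score would partly hide.

```python
from collections import defaultdict


def precision_recall(y_true, y_pred):
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall


def roc_auc(y_true, scores):
    """Probability that a random positive outranks a random negative (ties count half)."""
    pos = [s for t, s in zip(y_true, scores) if t == 1]
    neg = [s for t, s in zip(y_true, scores) if t == 0]
    if not pos or not neg:
        return float("nan")  # undefined when a cohort has only one class
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


def metrics_by_cohort(cohorts, y_true, scores, threshold=0.5):
    groups = defaultdict(list)
    for k, cohort in enumerate(cohorts):
        groups[cohort].append(k)
    report = {}
    for cohort, idx in groups.items():
        t = [y_true[k] for k in idx]
        s = [scores[k] for k in idx]
        preds = [int(v >= threshold) for v in s]
        report[cohort] = precision_recall(t, preds) + (roc_auc(t, s),)
    return report


# Hypothetical labels and scores for two cohorts.
cohorts = ["A", "A", "A", "A", "B", "B", "B", "B"]
y_true = [1, 0, 1, 0, 1, 0, 1, 0]
scores = [0.9, 0.2, 0.8, 0.3, 0.6, 0.7, 0.4, 0.1]
report = metrics_by_cohort(cohorts, y_true, scores)
for cohort, (p, r, auc) in sorted(report.items()):
    print(f"{cohort}: precision={p:.2f} recall={r:.2f} auc={auc:.2f}")
```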

Robustness also matters. People test shifts in lighting, angle, or noise. They simulate corruptions and measure drops. Dashboards show which corruptions hurt most. Teams then harden the model with augmentations or new data (Hendrycks and Dietterich, 2019; Geirhos et al., 2019).

Closing the loop after launch

Models change after launch. Users change. Data changes. Visual analytic intelligence keeps pace. Teams watch concept drift and data drift in real time. They set alerts for spikes in failure rates. They roll back or roll forward with canary releases (Schmidt et al., 2021; Breck et al., 2017).
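One common drift signal is the population stability index (PSI). It compares binned feature distributions between a reference window and live traffic. The sketch assumes fixed bin edges and drops values outside them. The alert threshold of 0.25 is a widely quoted rule of thumb, used here as a hypothetical setting rather than a recommendation.

```python
import math
from bisect import bisect_right


def bin_shares(values, edges, eps=1e-6):
    """Share of values per bin; values outside [edges[0], edges[-1]] are dropped."""
    counts = [0] * (len(edges) - 1)
    for v in values:
        if edges[0] <= v <= edges[-1]:
            b = min(bisect_right(edges, v) - 1, len(counts) - 1)  # right edge joins last bin
            counts[b] += 1
    total = sum(counts) or 1
    # eps keeps empty bins from producing log(0).
    return [max(c / total, eps) for c in counts]


def psi(reference, live, edges):
    ref, cur = bin_shares(reference, edges), bin_shares(live, edges)
    return sum((c - r) * math.log(c / r) for r, c in zip(ref, cur))


ALERT_THRESHOLD = 0.25  # hypothetical; a widely quoted rule of thumb, tune per feature
edges = [0.0, 0.25, 0.5, 0.75, 1.0]
reference = [0.1, 0.3, 0.6, 0.9] * 25  # hypothetical training-time feature values
live = [0.6, 0.9] * 50                 # hypothetical live values, shifted upward

score = psi(reference, live, edges)
print(f"PSI={score:.2f}", "ALERT" if score > ALERT_THRESHOLD else "ok")
```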

A feedback loop sends hard cases back into the training data. People label them and retrain. Plots show gains on live cohorts, not just lab splits. This cycle keeps accuracy high and maintains trust (Amershi et al., 2019; Wexler et al., 2019).

Education and team culture

Visual methods help teams learn. New members grasp models faster when they see interactive visuals. Senior staff catch weak points early. Meetings become shorter and more focused. Pictures of failure cases cut debate and align action (Krause et al., 2016; Hohman et al., 2019).

Leaders also use visuals to guide decisions. They see risk beside reward. They approve changes with a clear view of impact. Good visuals drive good governance.

Limits and honest communication

No method gives full truth. Saliency can mislead. Gradients can saturate. A neat plot can hide a brittle edge. Teams must avoid overconfidence. They should cross‑check with multiple methods and keep humans in the loop (Adebayo et al., 2018; Rudin, 2019).

Fairness needs care too. A model can pass broad tests and still fail a group. Slices and audits help. Clear plots make gaps visible. Teams then fix causes, not symptoms (Mitchell et al., 2019; Selbst and Barocas, 2018).


A quick guide to key building blocks

- Artificial neural network: a stack of layers that turn features into predictions.
- Convolutional neural networks: models for images and spatial data.
- Recurrent neural networks: models for sequences and time.
- Multilayer perceptron: a dense feed‑forward baseline.
- Activation functions: rules that shape signals in each unit.
- Output layer: the final mapping to a class, score, or value.

Each block benefits from clear plots and careful tests. Teams build confidence when they see how parts work together (Goodfellow et al., 2016; LeCun et al., 2015).

Odd terms that still need attention

Some legacy notes fuse “input and output” and “neural networks” into a single phrase. The phrase looks odd, yet it points at a real need: people must define inputs and outputs early and keep them stable through the project. Strong names and clear schemas stop silent bugs and make hand‑offs smoother.
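A minimal sketch of an explicit input/output schema check, using only the standard library. The field names, types, and ranges are hypothetical. The point is that a record with a renamed or mistyped field fails loudly instead of flowing silently into the model.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Field:
    name: str
    dtype: type
    min_value: Optional[float] = None
    max_value: Optional[float] = None


# Hypothetical schemas for illustration; real projects would version these.
INPUT_SCHEMA = (Field("age", int, 0, 120), Field("dose_mg", float, 0.0, None))
OUTPUT_SCHEMA = (Field("risk_score", float, 0.0, 1.0),)


def validate(record, schema):
    """Return a list of human-readable problems; an empty list means the record passes."""
    errors = []
    expected = {f.name for f in schema}
    for extra in sorted(set(record) - expected):
        errors.append(f"unexpected field: {extra}")
    for f in schema:
        if f.name not in record:
            errors.append(f"missing field: {f.name}")
            continue
        value = record[f.name]
        if not isinstance(value, f.dtype):
            errors.append(f"{f.name}: expected {f.dtype.__name__}, got {type(value).__name__}")
            continue
        if f.min_value is not None and value < f.min_value:
            errors.append(f"{f.name}: {value} below {f.min_value}")
        if f.max_value is not None and value > f.max_value:
            errors.append(f"{f.name}: {value} above {f.max_value}")
    return errors


print(validate({"age": 42, "dose_mg": 5.0}, INPUT_SCHEMA))
print(validate({"age": "42", "dose": 5.0}, INPUT_SCHEMA))
print(validate({"risk_score": 1.2}, OUTPUT_SCHEMA))
```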


TechnoLynx: Turning insight into action

TechnoLynx designs visual analytic intelligence workflows that fit real teams. We build interactive visuals that show how models behave in real time. We support image, text, and tabular tasks with data-driven dashboards that link training data to the output layer and back.

Our engineers tune neural network architectures for the job. We test convolutional neural networks, recurrent neural networks, and a multilayer perceptron when it makes sense.

We choose activation functions that keep gradients stable. We size computational power for cost and speed. We also wire alerts for drift and quality so your staff can react fast.

Our solutions fit regulated settings and high‑stakes use. We log each run, track the learning model through its life, and keep visualised data clear and auditable. We help you ship models that your experts can trust and your leaders can defend. If you need a clean path from idea to value, our team can guide that path with clear methods and practical code.

Interested to learn more? Contact us today to start exploring possible scenarios!

References

- Adebayo, J. et al. (2018) ‘Sanity checks for saliency maps’, NeurIPS, pp. 9505–9515.
- Amershi, S. et al. (2019) ‘Guidelines for human‑AI interaction’, CHI, pp. 1–13.
- Ba, J.L., Kiros, J.R. and Hinton, G.E. (2016) ‘Layer normalization’, arXiv:1607.06450.
- Biewald, L. (2020) ‘Experiment tracking with Weights and Biases’, software available from wandb.com.
- Breck, E. et al. (2017) ‘The ML test score: A rubric for ML production readiness and technical debt reduction’, IEEE International Conference on Big Data.
- Cho, K. et al. (2014) ‘Learning phrase representations using RNN encoder–decoder’, EMNLP, pp. 1724–1734.
- Dodge, J. et al. (2019) ‘Show your work: Improved reporting of experimental results’, EMNLP‑IJCNLP.
- Doshi‑Velez, F. and Kim, B. (2017) ‘Towards a rigorous science of interpretable ML’, arXiv:1702.08608.
- Geirhos, R. et al. (2019) ‘ImageNet-trained CNNs are biased towards texture’, ICLR, pp. 1–16.
- Glorot, X., Bordes, A. and Bengio, Y. (2011) ‘Deep sparse rectifier neural networks’, AISTATS, pp. 315–323.
- Goodfellow, I., Bengio, Y. and Courville, A. (2016) Deep Learning. MIT Press.
- Guo, C. et al. (2017) ‘On calibration of modern neural networks’, ICML, pp. 1321–1330.
- Han, S. et al. (2016) ‘Deep compression: Compressing deep neural networks’, ICLR.
- He, K. et al. (2016) ‘Deep residual learning for image recognition’, CVPR, pp. 770–778.
- Hendrycks, D. and Dietterich, T. (2019) ‘Benchmarking neural network robustness to common corruptions’, ICLR.
- Hendrycks, D. and Gimpel, K. (2016) ‘Gaussian error linear units’, arXiv:1606.08415.
- Hinton, G., Vinyals, O. and Dean, J. (2015) ‘Distilling the knowledge in a neural network’, NIPS Workshop.
- Hochreiter, S. and Schmidhuber, J. (1997) ‘Long short‑term memory’, Neural Computation, 9(8), pp. 1735–1780.
- Hohman, F. et al. (2019) ‘Gamut: A design probe to understand how data scientists understand machine learning models’, CHI.
- Howard, A.G. et al. (2017) ‘MobileNets: Efficient CNNs for mobile vision’, arXiv:1704.04861.
- Ioffe, S. and Szegedy, C. (2015) ‘Batch normalization’, ICML, pp. 448–456.
- Jacob, B. et al. (2018) ‘Quantization and training of neural networks for efficient inference’, CVPR, pp. 2704–2713.
- Krause, J., Perer, A. and Ng, K. (2016) ‘Interacting with predictions: Visual inspection of black‑box machine learning models’, CHI.
- Krishnan, S. and Wu, E. (2017) ‘AlphaClean: Automatic generation of data cleaning pipelines’, SIGMOD, pp. 1–16.
- LeCun, Y., Bengio, Y. and Hinton, G. (2015) ‘Deep learning’, Nature, 521, pp. 436–444.
- Lipton, Z.C. and Steinhardt, J. (2019) ‘Troubling trends in ML scholarship’, Queue, 17(1), pp. 45–77.
- Lundberg, S.M. and Lee, S.‑I. (2017) ‘A unified approach to interpreting model predictions’, NeurIPS, pp. 4765–4774.
- McInnes, L., Healy, J. and Melville, J. (2018) ‘UMAP: Uniform manifold approximation and projection’, arXiv:1802.03426.
- Mitchell, T. (1997) Machine Learning. McGraw‑Hill.
- Mitchell, M. et al. (2019) ‘Model cards for model reporting’, FAT Conference, pp. 220–229.
- Niculescu‑Mizil, A. and Caruana, R. (2005) ‘Predicting good probabilities with supervised learning’, ICML, pp. 625–632.
- Olah, C. et al. (2018) ‘The building blocks of interpretability’, Distill, 3(3).
- Ribeiro, M.T., Singh, S. and Guestrin, C. (2016) ‘“Why should I trust you?” Explaining predictions of any classifier’, KDD, pp. 1135–1144.
- Rudin, C. (2019) ‘Stop explaining black box models for high stakes decisions’, Nature Machine Intelligence, 1, pp. 206–215.
- Saito, T. and Rehmsmeier, M. (2015) ‘The precision‑recall plot is more informative than the ROC plot when evaluating binary classifiers on imbalanced datasets’, PLOS One, 10(3), e0118432.
- Schmidt, P. et al. (2021) ‘Data drift detection and monitoring in ML systems’, VLDB Workshop.
- Sculley, D. et al. (2015) ‘Hidden technical debt in machine learning systems’, NeurIPS, pp. 2503–2511.
- Selbst, A.D. and Barocas, S. (2018) ‘The intuitive appeal of explainable machines’, Fordham L. Rev., 87, pp. 1085–1139.
- Selvaraju, R.R. et al. (2017) ‘Grad‑CAM: Visual explanations from deep networks’, ICCV, pp. 618–626.
- Simonyan, K., Vedaldi, A. and Zisserman, A. (2014) ‘Deep inside convolutional networks: Visualising image classification models’, ICLR.
- Van der Maaten, L. and Hinton, G. (2008) ‘Visualizing data using t‑SNE’, JMLR, 9, pp. 2579–2605.
- Wexler, J. et al. (2019) ‘The What‑If Tool: Interactive probing of ML models’, IEEE TVCG, 26(1), pp. 56–65.
- Zeiler, M.D. and Fergus, R. (2014) ‘Visualizing and understanding convolutional networks’, ECCV, pp. 818–833.