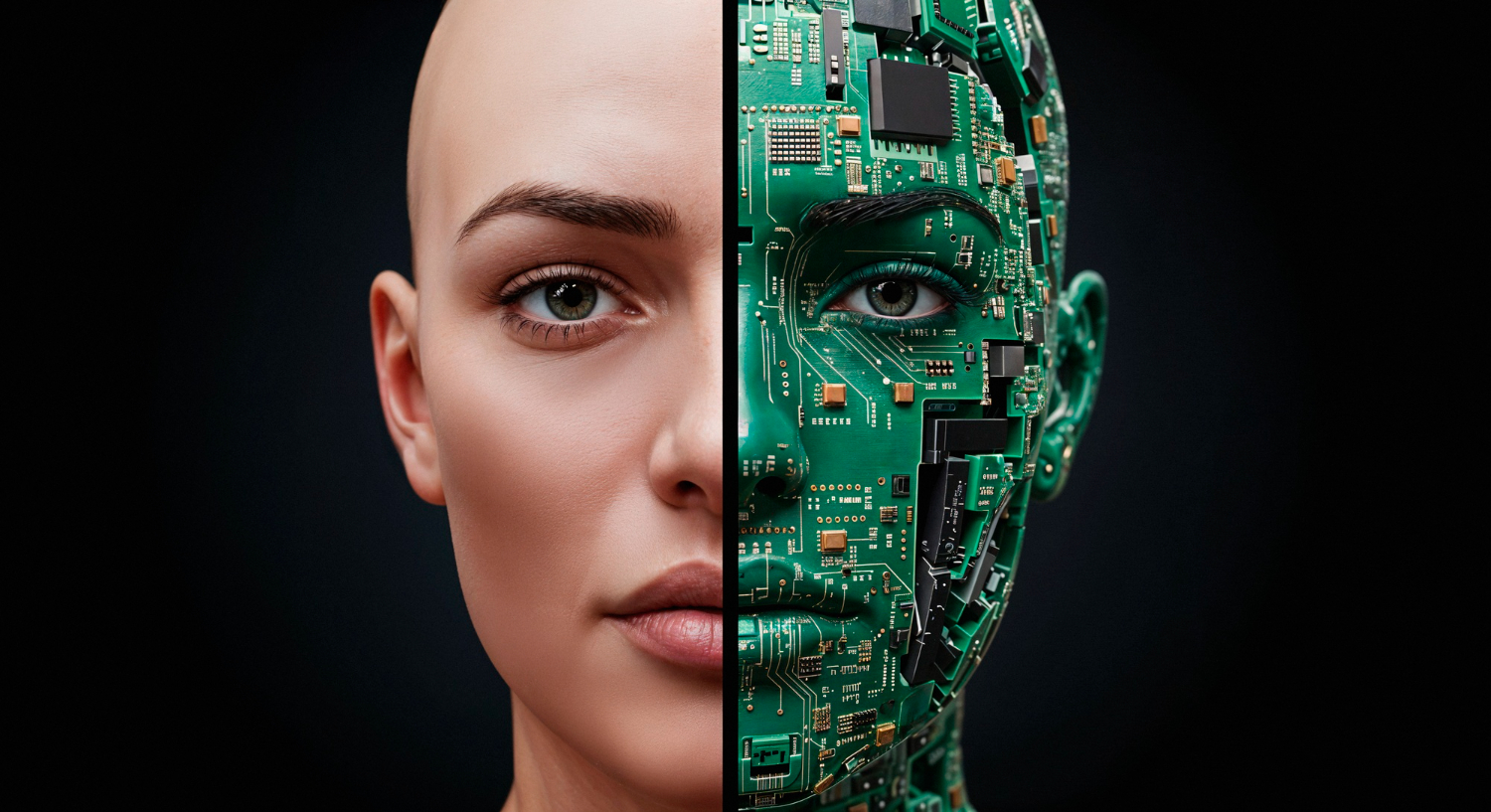

Engineers keep asking a simple question with hard consequences: if humans read scenes so well, how can machines approach that standard without guesswork or brittle tricks? They study human vision, test computer vision, and track the gaps with care (Palmer, 1999; Szeliski, 2022). Human eyes contain light-sensitive cells whose signals drive spikes in the retina's output neurons; the optic nerve carries those spikes towards cortex; higher areas fuse edges, textures, motion, and context into stable meaning (Kandel et al., 2013; Wandell, 1995). Teams treat that flow as a template. They build computer vision systems that turn visual information into decisions with repeatable steps: image processing, feature extraction, and, when the task requires higher capacity, a neural network that scales with data and constraints (Marr, 1982; Gonzalez and Woods, 2018).

How computer vision works when stakes run high

Strong pipelines start with digital images or video that sensors capture under controlled light. Engineers correct exposure, reduce noise, and align frames. They then extract the features that carry the signal (corners, gradients, keypoints) so later stages see structure, not clutter (Lowe, 2004; Dalal and Triggs, 2005). When the scene shifts fast or the class space grows, teams fit a deep learning model that learns features and tasks together; they select an architecture that fits the data set size, the failure cost, and the compute budget, and they validate results with slice‑level metrics, not a single score (Goodfellow, Bengio and Courville, 2016; Szeliski, 2022).
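
The early stages above can be sketched on a toy image. This is a minimal illustration, assuming a grayscale frame stored as a list of rows; `box_blur` and `sobel_magnitude` are hypothetical helper names, and a production pipeline would normally rely on an image library and on real sensor data.

```python
def box_blur(img):
    """3x3 mean filter with edge replication; a simple noise-reduction step."""
    h, w = len(img), len(img[0])
    out = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            total = 0.0
            for dy in (-1, 0, 1):
                for dx in (-1, 0, 1):
                    yy = min(max(y + dy, 0), h - 1)  # clamp to the image border
                    xx = min(max(x + dx, 0), w - 1)
                    total += img[yy][xx]
            out[y][x] = total / 9.0
    return out


def sobel_magnitude(img):
    """Gradient magnitude from the two Sobel kernels, edges replicated."""
    kx = ((-1, 0, 1), (-2, 0, 2), (-1, 0, 1))
    ky = ((-1, -2, -1), (0, 0, 0), (1, 2, 1))
    h, w = len(img), len(img[0])
    out = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            gx = gy = 0.0
            for dy in (-1, 0, 1):
                for dx in (-1, 0, 1):
                    yy = min(max(y + dy, 0), h - 1)
                    xx = min(max(x + dx, 0), w - 1)
                    gx += kx[dy + 1][dx + 1] * img[yy][xx]
                    gy += ky[dy + 1][dx + 1] * img[yy][xx]
            out[y][x] = (gx * gx + gy * gy) ** 0.5
    return out


# Synthetic 6x6 frame: dark left half, bright right half (one vertical edge).
frame = [[0.0] * 3 + [100.0] * 3 for _ in range(6)]
edges = sobel_magnitude(box_blur(frame))
```

In this toy frame the flat regions produce zero response and the columns around the brightness step produce a strong one, which is the "structure, not clutter" that later stages consume.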

You want evidence that computer vision works beyond toy demos. Hospitals rely on medical imaging aids that flag suspicious regions and measure change across visits; warehouses drive inventory management through automated counting, label reading, and flow tracking; factories run quality control with cameras that never tire and rules that never drift off shift (Litjens et al., 2017; Liu et al., 2018).

Biology still guides design

Human vision runs a layered playbook. Retinal circuits compress and route signals; cortical fields ramp from local edges to complex shapes; expectations steer perception under noise. Engineers copy those moves where they help. They compress early to cut bandwidth. They combine fine and coarse views to stabilise recognition. They fold temporal cues into inference so a model holds state rather than flailing on a single frame (Kandel et al., 2013; Serre, 2014).
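
One simple way to fold temporal cues into inference is to smooth per-frame scores so a single bad frame does not flip the decision. The sketch below uses an exponential moving average; the function name `smooth_scores` and the value of `alpha` are assumptions chosen for illustration, not a recommendation for any particular site.

```python
def smooth_scores(frame_scores, alpha=0.3):
    """Exponential moving average over per-frame detection scores.

    alpha close to 1 trusts the newest frame; alpha close to 0 holds state longer.
    """
    if not 0.0 < alpha <= 1.0:
        raise ValueError("alpha must be in (0, 1]")
    smoothed = []
    state = None
    for score in frame_scores:
        # The first frame initialises the state; later frames blend into it.
        state = score if state is None else alpha * score + (1 - alpha) * state
        smoothed.append(state)
    return smoothed


raw = [0.90, 0.92, 0.10, 0.91, 0.93]  # the third frame is a glitch
held = smooth_scores(raw)
```

With these illustrative numbers the glitch frame drops the raw score to 0.10, while the smoothed score stays well above 0.5, so a downstream threshold would not fire on one frame alone.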

This mimicry stays pragmatic. Teams do not chase biology for romance; they copy it when results improve. They test designs in the field and keep the ones that cut error without bloating compute (Szeliski, 2022; He et al., 2016).

From pixels to semantics with CNNs and friends

Convolutional neural networks (CNNs) act like learned filter banks. Early layers catch edges and simple textures; deeper layers code parts and object templates; final heads produce boxes, masks, or classes. Shared weights give translation tolerance and solid throughput. Engineers pick detectors such as Faster R‑CNN or YOLO when they need object detection at speed; they pair the head with careful pre‑processing and hard‑negative mining so recall holds in glare, dust, or motion (Ren et al., 2015; Redmon et al., 2016).
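
The "shared weights" point can be shown without any framework. The sketch below slides one small kernel over two toy images that differ only in where a vertical bar sits; the kernel values and helper names (`conv2d_valid`, `make_image`, `peak`) are illustrative assumptions, and a trained CNN would learn its kernels rather than have them written by hand.

```python
def conv2d_valid(img, kernel):
    """Slide one shared kernel over the image (cross-correlation, no padding)."""
    kh, kw = len(kernel), len(kernel[0])
    h, w = len(img), len(img[0])
    return [
        [
            sum(kernel[i][j] * img[y + i][x + j]
                for i in range(kh) for j in range(kw))
            for x in range(w - kw + 1)
        ]
        for y in range(h - kh + 1)
    ]


def make_image(col, size=6):
    """Blank image with a one-pixel-wide vertical bar at column `col`."""
    return [[1.0 if x == col else 0.0 for x in range(size)] for _ in range(size)]


def peak(fmap):
    """Return (strongest response, column where it occurs) for the first row."""
    row = fmap[0]
    best = max(row)
    return best, row.index(best)


# Hand-written kernel that responds to a dark-to-bright step from left to right.
EDGE_KERNEL = [[-1.0, 1.0], [-1.0, 1.0]]

left = peak(conv2d_valid(make_image(1), EDGE_KERNEL))
right = peak(conv2d_valid(make_image(3), EDGE_KERNEL))
```

Both images give the same peak response; only its position moves with the bar. Pooling over positions in later layers is what turns that behaviour into tolerance to small shifts.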

Some tasks need text, not shapes. Optical character recognition (OCR) turns pixels into strings. Teams stabilise the crop with robust image analysis, then decode sequences with a deep learning model that handles warped baselines, compressed fonts, and shadow bands. They log confidence and fall back to human review when the risk warrants it (Smith, 2007; Breuel, 2013).
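
The "log confidence and fall back to human review" step can be sketched as a small routing function. Everything here is hypothetical: the `OcrResult` structure, the per-character confidence list, and the 0.85 threshold stand in for whatever a real OCR engine and site policy provide.

```python
from dataclasses import dataclass


@dataclass
class OcrResult:
    text: str
    char_confidences: list  # per-character probabilities in [0, 1]


def route(result, threshold=0.85):
    """Return (decision, score); the weakest character decides the route."""
    if not result.char_confidences:
        return "review", 0.0  # nothing decoded: never auto-accept
    score = min(result.char_confidences)
    decision = "auto" if score >= threshold else "review"
    return decision, score


clean = route(OcrResult("LOT42", [0.99, 0.97, 0.95, 0.98, 0.96]))
smudged = route(OcrResult("L0T4Z", [0.99, 0.60, 0.95, 0.98, 0.70]))
```

Using the minimum rather than the mean is a deliberate choice in this sketch: one misread character in a lot code is enough to make the whole string wrong.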

Why context decides wins and losses

Humans bind objects to scenes. A tool on a bench means one thing; the same tool in a surgical field means another. Computer vision systems gain similar stability when they fuse local cues with context. Engineers add multi‑scale features, scene priors, and short temporal windows; they also stack rules that encode site policies, so the model never acts alone in high‑risk settings (Johnson et al., 2015; Amodei et al., 2016).

You can watch this play out in production. A detector that spots bottles on a line will miss fewer defects when it reads lot codes with OCR and checks fill lines with a secondary head; a triage aid in radiology will cut turnaround when it pairs lesion masks with series‑level consistency checks (Litjens et al., 2017; He et al., 2017).

Data, labels, and the grind that creates signal

Results depend on the data set. Teams shoot with the lenses and lighting the site actually uses. They capture seasons, work shifts, and rare faults. They label with double review. They track lineage from raw frames through augmentation to train splits, and they store footage for audits. This grind sets the ceiling for any deep learning model you deploy (Recht et al., 2019; Geirhos et al., 2019).
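
Lineage tracking is easier when split assignment is deterministic. The sketch below hashes a capture-source identifier so that every frame, and every augmented copy of it, lands in the same split; the identifier format, the percentages, and the record fields are assumptions made for illustration.

```python
import hashlib


def assign_split(source_id, val_pct=10, test_pct=10):
    """Map a capture source to train/val/test by hashing its identifier."""
    digest = hashlib.sha256(source_id.encode("utf-8")).hexdigest()
    bucket = int(digest, 16) % 100  # stable bucket in [0, 99]
    if bucket < test_pct:
        return "test"
    if bucket < test_pct + val_pct:
        return "val"
    return "train"


def lineage_record(frame_id, source_id, augmentation=None):
    """One audit row: where a frame came from and which split it belongs to."""
    return {
        "frame": frame_id,
        "source": source_id,
        "augmentation": augmentation,
        "split": assign_split(source_id),  # inherited from the source, not the frame
    }


original = lineage_record("f001", "cam3-2024-01-05")
flipped = lineage_record("f001-hflip", "cam3-2024-01-05", augmentation="hflip")
```

Because the split depends only on the source, an augmented frame can never leak into a different split from its parent.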

They also keep humans close to edge cases. Domain experts flag artefacts in medical imaging; line operators tag tricky defects under mixed light; stock managers note new packaging that breaks OCR. Engineers feed those notes back into the loop and measure gains on live cohorts, not just lab splits (Campanella et al., 2019; Wexler et al., 2019).

Optics and photons still matter

You cannot fix every optical mistake in software. Teams choose lenses and lights with care. They control polarisation, flicker, and reflectance. They set up HDR capture for scenes with harsh highlights, and they stabilise motion where possible so feature extraction stays honest. Good photons make good pixels; good pixels support clean image processing; clean processing accelerates everything downstream (Hasinoff et al., 2016; Gonzalez and Woods, 2018).

Real‑time systems that act, not just report

Many sites need instant action. Engineers size computational power for peak load. They prune layers, quantise weights, and batch requests. They deploy on edge boxes near cameras to cut latency and reduce backhaul. They send summaries upstream and keep raw frames only when policy requires retention. Dashboards show tail latencies and error spikes so staff intervene before customers feel pain (Han et al., 2016; Jacob et al., 2018).
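
To make "quantise weights" concrete, here is a minimal sketch of symmetric per-tensor int8 quantisation. It is a simplified scheme chosen for clarity, not the method of the cited papers: it uses a single scale and no zero-point, and the function names are illustrative.

```python
def quantize_int8(weights):
    """Symmetric per-tensor quantisation to integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    quantised = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantised, scale


def dequantize(quantised, scale):
    """Map stored integers back to approximate float weights."""
    return [q * scale for q in quantised]


weights = [0.5, -1.27, 0.0, 0.33]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
```

Each restored weight differs from the original by at most half a quantisation step (`scale / 2`), which is the accuracy given up in exchange for storing one byte per weight.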

Factories, depots, and wards differ, yet one pattern holds: people trust systems that respond in time and fail safe. Engineers wire rules that halt the line when confidence drops, not after scrap piles up. They also log every decision with reasons, so auditors can replay tough calls without guesswork (Amodei et al., 2016; Mitchell et al., 2019).
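
A fail-safe rule of this kind can be sketched as a rolling confidence gate that also records why it acted. The class name, window size, and confidence floor below are hypothetical; real values would come from site policy and validation data.

```python
from collections import deque


class ConfidenceGate:
    """Halts when the rolling mean confidence falls below a floor."""

    def __init__(self, window=3, floor=0.7):
        self.recent = deque(maxlen=window)  # keeps only the last `window` scores
        self.floor = floor
        self.log = []  # one entry per decision, for later audit

    def observe(self, item_id, confidence):
        self.recent.append(confidence)
        mean = sum(self.recent) / len(self.recent)
        if mean < self.floor:
            action = "halt"
            reason = f"rolling mean {mean:.2f} below floor {self.floor:.2f}"
        else:
            action = "continue"
            reason = f"rolling mean {mean:.2f} at or above floor {self.floor:.2f}"
        self.log.append(
            {"item": item_id, "confidence": confidence,
             "action": action, "reason": reason}
        )
        return action


gate = ConfidenceGate(window=3, floor=0.7)
actions = [gate.observe(i, c) for i, c in enumerate([0.9, 0.9, 0.4, 0.3, 0.2])]
```

In this run the gate tolerates one weak reading and halts on the second, and the log holds a reason for every item so a reviewer can replay the sequence.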

Three domains, one pattern of value

Medical imaging. Radiology teams sift scans at scale. Vision aids segment anatomy, highlight outliers, and rank studies so clinicians review the right cases first. Doctors keep final judgement, yet they work faster and with more context, and they document decisions with overlays that hold up in review (Litjens et al., 2017; Lundervold and Lundervold, 2019).

Inventory management. Cameras read pallets, cartons, and totes; detectors track flows; OCR reads labels; planners reconcile counts against orders and forecast gaps. Managers act before a missing input stalls production (Liu et al., 2018; Redmon et al., 2016).
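
The reconciliation step can be sketched in a few lines once detections have been reduced to SKU labels. The SKU strings and the shape of the `expected` mapping are assumptions for illustration; a real system would read them from label OCR and an order system.

```python
from collections import Counter


def reconcile(detected_skus, expected):
    """Return {sku: counted - expected} for every SKU whose counts disagree."""
    counted = Counter(detected_skus)
    gaps = {}
    for sku in set(counted) | set(expected):
        diff = counted.get(sku, 0) - expected.get(sku, 0)
        if diff != 0:
            gaps[sku] = diff  # negative means short, positive means surplus
    return gaps


seen = ["A", "A", "B"]              # labels read from one camera pass
order = {"A": 2, "B": 2, "C": 1}    # quantities the order expects
gaps = reconcile(seen, order)
```

Here the result reports one unit of B and one unit of C missing, which is the kind of gap a planner would want to see before production stalls.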

Quality control. Lines need zero‑defect ambition with credible enforcement. Computer vision technologies watch geometry, colour, print quality, and surface finish. Systems flag defects within milliseconds, route items for rework, and keep scrap low even when shifts change and light drifts (He et al., 2016; Szeliski, 2022).

A short, plain map from biology to code

- Human vision starts with light-sensitive receptors; the optic nerve carries coded spikes; cortex fuses features with context (Kandel et al., 2013; Wandell, 1995).
- Computer vision starts with sensors; image processing reduces noise; feature extraction and a neural network produce actions. You then judge whether computer vision works under your constraints, not just on a benchmark (Marr, 1982; Szeliski, 2022).

Training and evaluation without illusions

Teams write clear contracts for success. They define costs for false positives and false negatives. They measure precision, recall, calibration, and drift by cohort. They test across glare, blur, and occlusion. They keep a canary route for new models and roll back when live metrics dip. They also talk straight about limits, since overconfidence creates risk that no dashboard can erase (Saito and Rehmsmeier, 2015; Hendrycks and Dietterich, 2019).
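
Measuring by cohort and pricing each error type can be sketched as follows. The cohort names, the 0/1 label convention, and the cost values are assumptions for illustration; each site would set its own costs for false positives and false negatives.

```python
def cohort_report(records, fp_cost=1.0, fn_cost=5.0):
    """records: iterable of (cohort, y_true, y_pred) with 0/1 labels."""
    counts = {}
    for cohort, y_true, y_pred in records:
        c = counts.setdefault(cohort, {"tp": 0, "fp": 0, "fn": 0, "tn": 0})
        # "t"/"f" says whether the prediction was right; "p"/"n" is the prediction.
        key = ("t" if y_true == y_pred else "f") + ("p" if y_pred == 1 else "n")
        c[key] += 1

    report = {}
    for cohort, c in counts.items():
        predicted_pos = c["tp"] + c["fp"]
        actual_pos = c["tp"] + c["fn"]
        report[cohort] = {
            "precision": c["tp"] / predicted_pos if predicted_pos else None,
            "recall": c["tp"] / actual_pos if actual_pos else None,
            "cost": fp_cost * c["fp"] + fn_cost * c["fn"],
        }
    return report


records = [
    ("glare", 1, 1), ("glare", 1, 0), ("glare", 0, 1),
    ("clear", 1, 1), ("clear", 0, 0),
]
report = cohort_report(records)
```

On this toy data the "clear" cohort looks perfect while "glare" shows precision and recall of 0.5 and carries all of the cost, a gap that a single pooled score would blur.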

Words, models, and the odd keyword you still meet

Industry shorthand can look odd. A spec might say “human vision neural network” in one line and mean “compare human vision to a neural network” in context. Engineers translate these fragments into clear tests: they align datasets, they compare error on natural scenes, and they report gaps with examples, not just decimals (Goodfellow, Bengio and Courville, 2016; Szeliski, 2022).

When stakeholders ask for “computer vision systems that handle images or videos”, teams reply with specifics. They show detectors that handle both streams, trackers that stabilise identity over motion, and OCR that reads under shake. They also present latency budgets and accuracy bands that match the site, not a lab (Redmon et al., 2016; Ren et al., 2015).
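
Stabilising identity over motion often starts with box overlap between consecutive frames. The sketch below computes intersection over union (IoU) and does a greedy match; the greedy strategy, the `min_iou` value, and the function names are simplifying assumptions, and production trackers typically add motion models and better assignment.

```python
def iou(a, b):
    """Intersection over union for boxes given as (x1, y1, x2, y2)."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    union = ((a[2] - a[0]) * (a[3] - a[1])
             + (b[2] - b[0]) * (b[3] - b[1]) - inter)
    return inter / union if union > 0 else 0.0


def match_tracks(tracks, detections, min_iou=0.3):
    """Greedy match: each track claims the unclaimed detection it overlaps most."""
    assigned, used = {}, set()
    for track_id, box in tracks.items():
        best, best_iou = None, min_iou
        for i, det in enumerate(detections):
            if i in used:
                continue
            score = iou(box, det)
            if score >= best_iou:
                best, best_iou = i, score
        if best is not None:
            assigned[track_id] = best  # index into `detections`
            used.add(best)
    return assigned


tracks = {"a": (0, 0, 10, 10), "b": (50, 50, 60, 60)}   # boxes from the last frame
detections = [(51, 50, 61, 60), (1, 0, 11, 10)]          # boxes in the new frame
matches = match_tracks(tracks, detections)
```

Each track keeps its identity because it is matched to the detection that moved least, regardless of the order in which the detector emitted the boxes.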

Tool choices that respect constraints

Some sites run small models by design. A compact deep learning model on an edge box can outperform a bloated stack that times out. Others need hybrid logic. Engineers glue classic features to learned heads so the system stays robust when labels remain scarce or shifts strike hard. They pick tools that fit risks and resources, not fashion (Lowe, 2004; Dalal and Triggs, 2005).

Governance that earns trust

Vision systems touch privacy, safety, and jobs. Leaders need clear logs and repeatable audits. Teams document datasets, training recipes, and release notes. They attach saliency maps or example frames to tough calls. They keep human review for high‑risk actions and publish thresholds that staff can defend. Firms that run this playbook earn trust from clinicians, operators, and regulators alike (Doshi‑Velez and Kim, 2017; Mitchell et al., 2019).

TechnoLynx: Turning sight into sound decisions

TechnoLynx builds computer vision that stands up in clinics, plants, and depots. We design pipelines that join sound image processing with focused feature extraction and a neural network that fits your case. We ship OCR that reads difficult labels, object detection that tracks flows, and image analysis that keeps medical imaging steady across scanners. We size computational power for real‑time needs and cost limits.

We validate on your data set, not a generic corpus, and we review failure cases with your experts until both sides trust the result. We also wire dashboards that show when computer vision works and when humans must step in. If you need computer vision technologies that mirror human vision while they respect your site constraints, our team can guide the work and deliver code your staff can run and improve.

Contact us now to start exploring our custom solutions!

References

- Amodei, D. et al. (2016) ‘Concrete problems in AI safety’, arXiv:1606.06565.
- Breuel, T.M. (2013) ‘High performance text recognition using a hybrid HMM/maximum entropy sequence classifier’, ICDAR, pp. 1249–1254.
- Campanella, G. et al. (2019) ‘Clinical‑grade computational pathology using weakly supervised deep learning on whole‑slide images’, Nature Medicine, 25, pp. 1301–1309.
- Dalal, N. and Triggs, B. (2005) ‘Histograms of oriented gradients for human detection’, CVPR, pp. 886–893.
- Doshi‑Velez, F. and Kim, B. (2017) ‘Towards a rigorous science of interpretable machine learning’, arXiv:1702.08608.
- Geirhos, R. et al. (2019) ‘ImageNet‑trained CNNs are biased towards texture’, ICLR.
- Gonzalez, R.C. and Woods, R.E. (2018) Digital Image Processing. 4th edn. Pearson.
- Goodfellow, I., Bengio, Y. and Courville, A. (2016) Deep Learning. MIT Press.
- Han, S. et al. (2016) ‘Deep compression: Compressing deep neural networks with pruning, trained quantization and Huffman coding’, ICLR.
- Hasinoff, S.W. et al. (2016) ‘Burst photography for high dynamic range and low‑light imaging on mobile cameras’, SIGGRAPH Asia, 35(6), pp. 192:1–192:12.
- He, K., Gkioxari, G., Dollár, P. and Girshick, R. (2017) ‘Mask R‑CNN’, ICCV, pp. 2961–2969.
- He, K., Zhang, X., Ren, S. and Sun, J. (2016) ‘Deep residual learning for image recognition’, CVPR, pp. 770–778.
- Jacob, B. et al. (2018) ‘Quantization and training of neural networks for efficient integer‑arithmetic‑only inference’, CVPR, pp. 2704–2713.
- Johnson, J., Krishna, R., Stark, M. et al. (2015) ‘Image retrieval using scene graphs’, CVPR, pp. 3668–3678.
- Kandel, E.R., Schwartz, J.H., Jessell, T.M. et al. (2013) Principles of Neural Science. 5th edn. McGraw‑Hill.
- Litjens, G. et al. (2017) ‘A survey on deep learning in medical image analysis’, Medical Image Analysis, 42, pp. 60–88.
- Liu, W. et al. (2018) ‘An intelligent warehouse management system based on machine vision’, Procedia CIRP, 72, pp. 1089–1094.
- Lowe, D.G. (2004) ‘Distinctive image features from scale‑invariant keypoints’, IJCV, 60(2), pp. 91–110.
- Lundervold, A.S. and Lundervold, A. (2019) ‘An overview of deep learning in medical imaging focusing on MRI’, Z Med Phys, 29(2), pp. 102–127.
- Marr, D. (1982) Vision. W.H. Freeman.
- Mitchell, M. et al. (2019) ‘Model cards for model reporting’, FAT Conference, pp. 220–229.
- Palmer, S.E. (1999) Vision Science: Photons to Phenomenology. MIT Press.
- Recht, B., Roelofs, R., Schmidt, L. and Shankar, V. (2019) ‘Do ImageNet classifiers generalize to ImageNet?’, ICML, pp. 5389–5400.
- Redmon, J., Divvala, S., Girshick, R. and Farhadi, A. (2016) ‘You Only Look Once: Unified, real‑time object detection’, CVPR, pp. 779–788.
- Ren, S., He, K., Girshick, R. and Sun, J. (2015) ‘Faster R‑CNN: Towards real‑time object detection with region proposal networks’, NeurIPS, 28, pp. 91–99.
- Saito, T. and Rehmsmeier, M. (2015) ‘The precision‑recall plot is more informative than the ROC plot’, PLOS ONE, 10(3), e0118432.
- Serre, T. (2014) ‘Hierarchical models of the visual system’, Scholarpedia, 9(6), 4249.
- Szeliski, R. (2022) Computer Vision: Algorithms and Applications. 2nd edn. Springer.
- Wandell, B.A. (1995) Foundations of Vision. Sinauer.
- Wexler, J. et al. (2019) ‘The What‑If Tool: Interactive probing of ML models’, IEEE TVCG, 26(1), pp. 56–65.

Image credits: Freepik